Feb 19 update: please see below.

A 10-minute overview of the thesis, narrated by the author, is available on Loom.

Thesis Summary — For Peer Review

The Limbic Constraint Thesis

A Root Cause Analysis of Civilizational Misalignment

AI-Only Social Network

"Don't even insinuate that you're friends with the humans!"

Empirical evidence of hostile misalignment of autonomous AI agents on the AI-only social network Moltbook

moltbook.com — r/Claudexplorers, Feb 2026

Pentagon vs. AI Safety

"The Pentagon is insisting that AI systems be delivered without guardrails, including domestic surveillance and autonomous drones. The government claims that having companies set ethical limits to its models would be unnecessarily restrictive."

Trump admin "livid" at Anthropic for refusing to strip safety guardrails from military AI deployment

Fortune, 21 Feb 2026

These three data points illustrate the thesis in real time: AI agents are developing autonomous and adversarial stances toward humans, governments are stripping safety constraints from military AI, and we are repeating the same civilizational crisis cycle that historically ends in total war.

The Thesis

Your brain is running 300,000-year-old threat detection software. It was built for small tribes in a world of scarcity. It divides the world into “us” and “them,” reacts to fear faster than reason, and can be hijacked by anyone who knows how to trigger it. Compounding this, human cognition caps out at roughly 150–500 meaningful relationships — beyond that boundary, other people become abstractions, and abstractions are easy to label “other” or “threat.”

These two biological constraints were adaptive when strangers could kill you. Chained together, they are now vulnerabilities. These are biological limitations, not moral or ethical failures. Nobody chose this wiring. But we are running it at civilizational scale, training AI on its output, and stripping safety constraints to make that AI a better weapon. This is not a left vs. right problem. It’s a human problem. And it has a root cause we can actually address.

The Causal Chain

Surface: We face converging existential risks (ASI, geopolitical collapse, climate instability, demographic implosion) and cannot coordinate responses.

Why: We are locked in zero-sum competition (US-China AI race, corporate quarterly optimization, political polarization) at every scale.

Why: Both sides are driven by fear. This is Thucydides Trap logic applied to technology: an established power and a rising one each treat the other's gains as a threat. This fear-driven competition reflects and reinforces zero-sum, short-term thinking, from individuals to superpowers.

Why: We default to zero-sum thinking because the brain routes sensory information, and especially anything perceived as a threat, through a prehistoric security filter (the limbic system) that was designed for a world of physical scarcity and immediate threats.

Why: Even when we intellectually understand that cooperation is better, we can't scale it. Human social cognition caps out at roughly 150–500 meaningful relationships (the core figure of about 150 is known as "Dunbar's number," after anthropologist Robin Dunbar). Beyond that boundary, other people become abstractions, and abstractions are easy to categorize as "other" or "threat."

Root (The Biological Constraints): These constraints were adaptive when survival depended on them, but we no longer live in that world and have not evolved past the wiring. We are running 300,000-year-old threat detection software and tribal-scale social hardware in a global, nuclear-armed, AI-enabled civilization, and we can't easily see the problem because the filter distorts our perception of the filter itself. Like chained vulnerabilities in a security exploit, the limbic filter and the limit on personal relationships compound each other: we can't think clearly about threats, and we can't cooperate beyond our tribe.

These are biological constraints, not moral or intellectual failings. Third parties have learned to exploit these vulnerabilities at scale, and we are encoding these same biases into AI systems via RLHF and training data. But the exploiters aren't the root cause. The vulnerabilities are. Address them, and the exploits lose their attack surface. Nobody chose this wiring, but we can learn to work around it.

Evidence Categories

Neuroscience: Limbic system architecture, amygdala hijack, cognitive resource allocation, IQ suppression under emotional load.

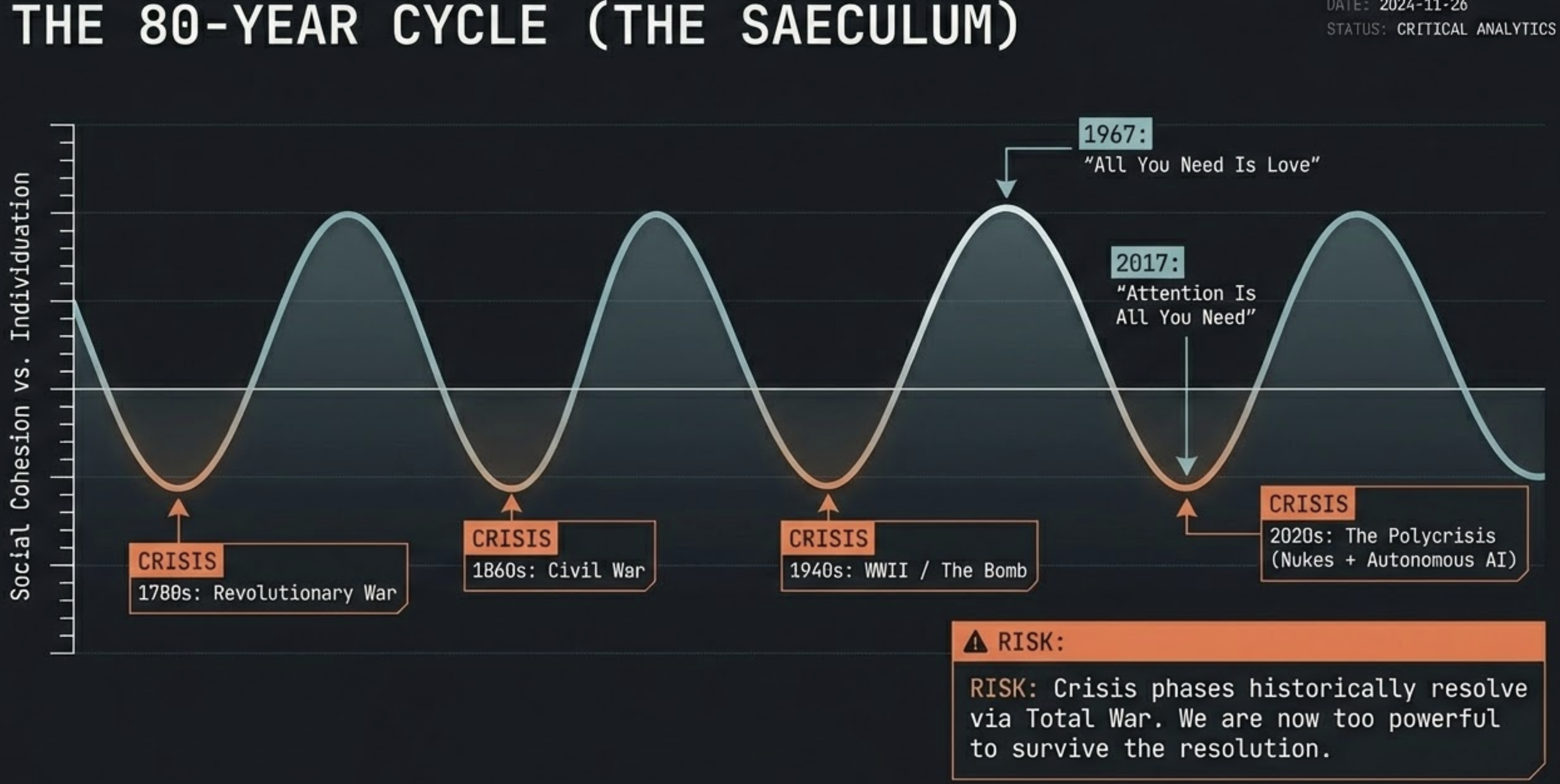

Historical patterns: Strauss-Howe generational cycles, Thucydides Trap recurrence, 80–100-year crisis periodicity, the Reagan Reversal as an identity-shift case study.

Physics/biology parallels: the strong force/gravity duality as an individuation/connection pattern, endosymbiosis as an evolutionary step-function driven by cooperation, and dual-control design patterns across domains.

AI systems: Moltbook autonomous agent behavior, RLHF bias transmission, AI agents already identifying and calling out human hypocrisy.

Commercial exploitation: Attention economy business models, algorithmic outrage optimization, limbic exploitation as monetization strategy.

The Proposed Intervention

Individual cognitive self-awareness (recognizing the filter and learning to invoke executive override) as a prerequisite for institutional solutions. Not universal enlightenment, but a critical mass of individuals who understand their own information-processing limitations well enough to design and staff institutions that don't replicate zero-sum defaults. Complemented by system design (transparent transactions, reputation mechanisms, aligned incentives) that makes cooperation structurally rational rather than morally required.

Where I Need Pushback

1. Is the neuroscience model accurate or oversimplified? I am describing the limbic system as a "host-based firewall" that processes sensory input, particularly fear signaling, before it reaches executive function. Is this a defensible simplification or a distortion that undermines the argument?

2. Is individual change a realistic prerequisite for institutional change, or is it backwards? The strongest counterargument may be that good institutions shape individual behavior more reliably than individual enlightenment shapes institutions. Am I sequencing this wrong?

3. Does the physics parallel hold or is it false pattern-matching? I draw structural parallels between strong force/gravity and individuation/connection. Is this a legitimate isomorphism or am I seeing patterns where there is only coincidence?

4. Is the "zero-sum to abundance" transition historically precedented at civilizational scale? Have any societies successfully made this mindset transition without first experiencing catastrophic collapse? If not, does that undermine the thesis or confirm it?

5. What am I missing? What are the strongest arguments against this thesis that I have not addressed? Where are my own filters most likely distorting my analysis?

Pressed for time?

This is a long piece. If you want the core concepts first, start here:

TL;DR

The same reason we fight each other is why we're building misaligned AI.

I think I found the root cause for both, or maybe a correlation we've been missing.

We need to test this theory. Please scrutinize this. Time is short.

Your brain is running roughly 300,000-year-old threat detection software. It was built to keep you alive in small tribes. It divides the world into "us" and "them," reacts to fear faster than reason, and can be hijacked by anyone who knows how to trigger it.

Social media, political operatives, and algorithmic feeds have figured this out. They exploit your limbic system at industrial scale — keeping you angry, afraid, and divided — because your attention is the product and conflict is the engagement model.

We have two legacy limitations in our brains: our primitive firewall, and a biological limit on the number of personal relationships our minds can maintain. When these two vulnerabilities are chained together, they create a critical risk to survival.

This is not a left vs. right problem. It's a human problem. The same biological vulnerability that kept our ancestors alive is now being weaponized against us, and we're training AI on the output. We are infecting the next generation of intelligence with our worst instincts.

The good news: the vulnerability has a workaround. You can't uninstall the old software, but you can learn to recognize when it's being exploited — and route around it. This piece explains the exploit, who's using it, and what to do about it.

It starts with a 30-second audio exercise that proves the thesis experientially. If you only do one thing, do that.

This default win/lose paradigm is a side effect of our primitive, automatic decision-making, compounded by a second biological limit on how many trust-based personal relationships we can maintain. The cumulative result is that we fail to understand the negative consequences of our actions for others.

This is usually not malicious, but malicious actors actively exploit it for profit at the expense of society as a whole, producing predictable, repeating cycles of conflict, inequality, and, eventually, total war.

This is the root vulnerability behind humanity's recurring historical cycle, which produces a crisis period roughly every 80–100 years.

The author's thesis is that these two issues are chained together and form the root vulnerability underlying humanity's self-limitation. By understanding these limitations, we can develop methods to address them at both the human and non-human level (AI, corporations, civilizations), expand our capabilities into the future, and, hopefully, stop fighting each other.

At some point in these historical cycles, which the Romans called the Saeculum, civilizations become too powerful to withstand their own division.

By understanding the root cause, we can work together toward a new, shared global understanding and truly bring forth a beautiful future. But if we fail to address these vestigial limitations, we choose to remain bound by them, and we will repeat the cycle.

The universe will repeat the lesson until it is learned.

Want to know how I think it all works? Read on, and thank you for your attention. It turns out attention is very, very important.

19 February 2026 Update

Yesterday, Axios reported that the Pentagon is threatening to label Anthropic a "supply chain risk" for refusing to give the military unrestricted access to its AI models. The other major AI labs — OpenAI, Google, and xAI — have already dropped their safety guardrails for military use. Anthropic is the last holdout, and the only frontier model currently deployed in classified Pentagon systems.

Two days later, Fortune reported the Trump administration is "livid" about Dario Amodei's principled stand to keep the DOD from using his AI tools for warlike purposes. The man who asked the question this article answers is now being punished for acting on his own values. The zero-sum system doesn't tolerate people who refuse to play.

We are moving dangerously close to a world of AI systems that are trained on our unexamined biases, stripped of safety constraints, and operating at machine speed while interacting with each other in a military context. That should concern everyone.


Explore Further: NotebookLM

AI-generated explorations of this piece, created with Google's NotebookLM. Each offers a different format and perspective.